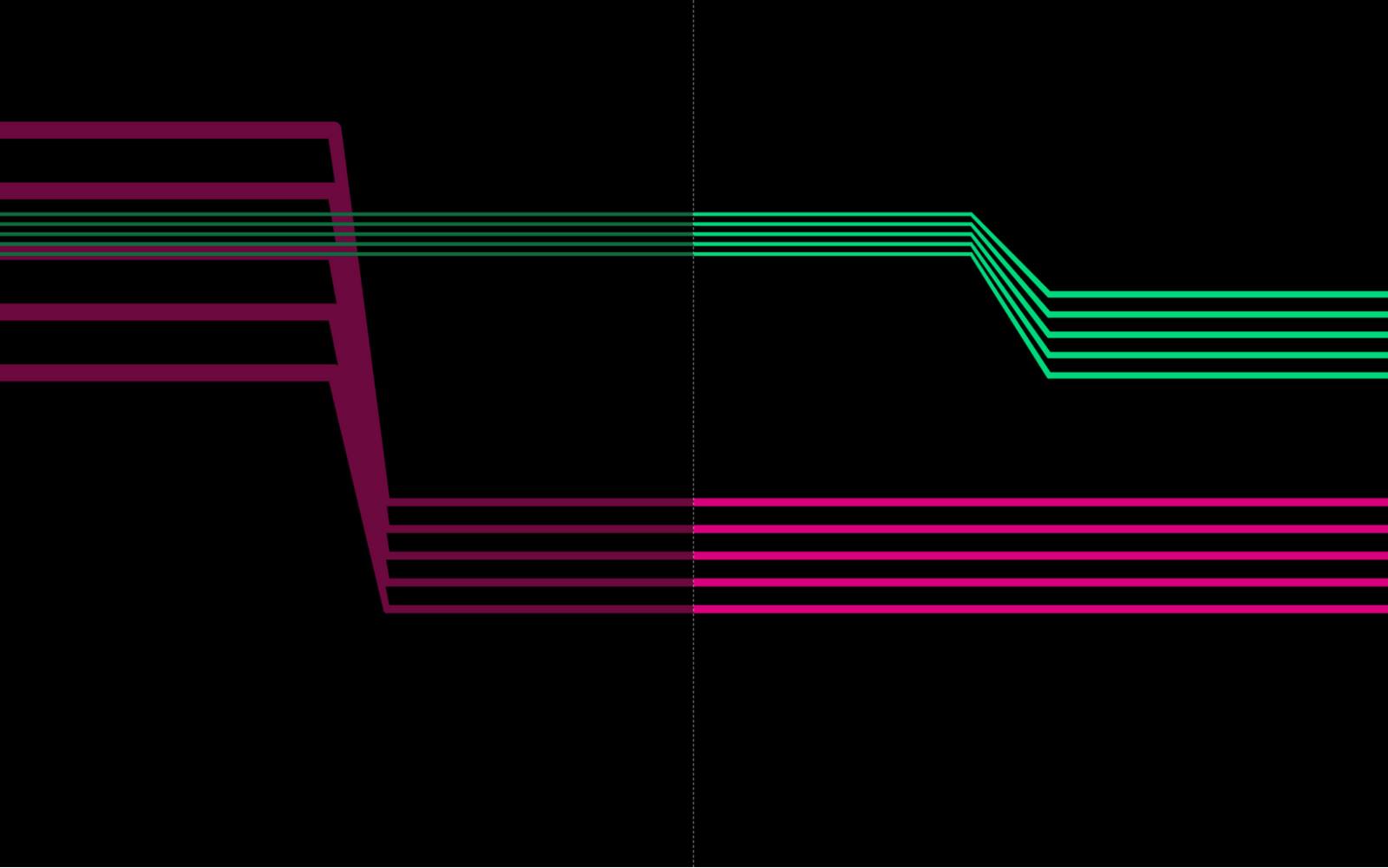

SYNTAX is an exercise in programming computers to program ourselves. The collaboration with Mike Hodnick, AKA Kindohm, includes four movements from me and four from Mike, for a total of eight generative, animated graphic scores. We follow the unpredictable yet familiar visuals, making each performance similar to, but distinct from, the next.

The piece questions technological idealism in an age of ecological disruption and data-driven exploitation. By deliberately coding and submitting to an “inversion of control,” we evoke the warnings of media theorists like Douglas Rushkoff about a future wherein our behavior might be irreversibly dictated by the algorithms in the software we use instead of by our own volition.

SYNTAX was performed at the Performing Media Festival on March 10, 2023, at LangLab in South Bend, Indiana, and the next day at the Dormouse Theatre in Kalamazoo, Michigan. The video excerpt above was presented at NIME 2022, and we performed the piece in person at the International Computer Music Conference (ICMC 2022) in Limerick in July 2022 and at iDMAa 2022 Weird Media in June 2022.